AI-generated images are no longer just creative outputs. They are quietly becoming part of the web’s underlying infrastructure, where they populate interfaces, fill content gaps, and automate visual decisions at scale.

This shift matters because infrastructure shapes behavior. When a technology becomes invisible, it also becomes unquestioned.

From Visual Media to System-Level Asset

Traditionally, images were created for a specific purpose: documentation, storytelling, marketing, or art. AI images, by contrast, are often generated as system assets. They exist to serve workflows rather than express reality.

Websites now auto-generate hero images, avatars, thumbnails, and background visuals without human selection. Recommendation engines test multiple visual variants in real time. Some platforms generate images dynamically based on user behavior or location.

In this context, AI images are not additions to content. They are functional components of digital systems.

The Metadata Problem No One Sees

One of the biggest technical challenges with AI images is not how they look, but what gets lost when they move across the web.

Many upload pipelines strip embedded metadata such as EXIF fields. Compression and re-encoding steps discard identifying markers. Screenshots erase origins entirely. Even when AI-generated images carry provenance data or watermarks, many platforms do not preserve them.
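
To make the loss concrete, here is a minimal sketch in Python using only the standard library. It builds a tiny PNG that carries a provenance note in a text chunk, then passes it through a simplified "upload" step that keeps only the chunks needed to display the image. The function names, the provenance string, and the pipeline behavior are all assumptions made for illustration. Real platforms usually re-encode the whole image, which has a similar effect on embedded metadata.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Serialize one PNG chunk: length, type, data, CRC-32 over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def read_chunks(png: bytes):
    """Yield (type, data) for every chunk in a PNG byte string."""
    if not png.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        chunk_type = png[pos + 4:pos + 8]
        data = png[pos + 8:pos + 8 + length]
        yield chunk_type, data
        pos += 12 + length  # 4 length + 4 type + data + 4 CRC


def build_sample_png(provenance: str) -> bytes:
    """Build a 1x1 grayscale PNG with a tEXt chunk holding a provenance note."""
    # IHDR: width, height, bit depth, color type, compression, filter, interlace
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    pixels = zlib.compress(b"\x00\x80")  # filter byte + one gray pixel
    text = b"Source\x00" + provenance.encode("latin-1")
    return (
        PNG_SIGNATURE
        + make_chunk(b"IHDR", ihdr)
        + make_chunk(b"tEXt", text)
        + make_chunk(b"IDAT", pixels)
        + make_chunk(b"IEND", b"")
    )


def strip_ancillary(png: bytes) -> bytes:
    """Hypothetical upload step: keep only the chunks needed for display."""
    critical = {b"IHDR", b"PLTE", b"IDAT", b"IEND"}
    kept = [make_chunk(t, d) for t, d in read_chunks(png) if t in critical]
    return PNG_SIGNATURE + b"".join(kept)


def text_metadata(png: bytes) -> dict:
    """Collect keyword/value pairs from all tEXt chunks."""
    found = {}
    for chunk_type, data in read_chunks(png):
        if chunk_type == b"tEXt":
            key, _, value = data.partition(b"\x00")
            found[key.decode("latin-1")] = value.decode("latin-1")
    return found


original = build_sample_png("generated-by: example-model")
uploaded = strip_ancillary(original)

print(text_metadata(original))  # {'Source': 'generated-by: example-model'}
print(text_metadata(uploaded))  # {}
```

The pixel data is identical before and after, so the two files look the same on screen. Only the note about where the image came from is gone.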

Once an image enters circulation, it becomes detached from its creation context. This makes later verification difficult, even for professionals.

As a result, the web treats all images as equal objects, regardless of whether they originated from a camera, a render engine, or a generative model.

Why Detection Is Falling Behind Generation

AI image generation improves through centralized model updates. Detection, however, is fragmented across tools, heuristics, and human judgment.

No single detection method works consistently. Pixel-level analysis can fail on compressed images. Visual inspection depends on training and attention. Contextual verification requires external data that may not exist.
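
A toy example in Python shows why pixel-level signals are fragile. It hides a marker in the lowest bit of each pixel value, then applies a crude quantization step as a stand-in for lossy compression. Both the marker scheme and the `lossy_roundtrip` function are assumptions made for illustration. Real detectors and real codecs are far more sophisticated, but the failure mode is similar in spirit: small pixel-level details do not survive re-encoding.

```python
def embed_bits(pixels, bits):
    """Hide one bit in the least significant bit of each pixel value."""
    return [(p & ~1) | b for p, b in zip(pixels, bits)]


def extract_bits(pixels, count):
    """Read the least significant bit of the first `count` pixels."""
    return [p & 1 for p in pixels[:count]]


def lossy_roundtrip(pixels, step=8):
    """Crude stand-in for lossy compression: round down to multiples of `step`."""
    return [(p // step) * step for p in pixels]


pixels = [12, 200, 37, 90, 141, 66, 250, 5]  # made-up grayscale values
marker = [1, 0, 1, 1, 0, 0, 1, 0]            # hypothetical pixel-level signal

marked = embed_bits(pixels, marker)
recompressed = lossy_roundtrip(marked)

print(extract_bits(marked, 8) == marker)        # True
print(extract_bits(recompressed, 8) == marker)  # False
```

The marker changes each pixel by at most one brightness level, so it is invisible to a viewer. That same subtlety is what makes it easy to destroy.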

This imbalance creates a moving target. As generators learn from past detection failures, visual cues disappear faster than detection standards can stabilize.

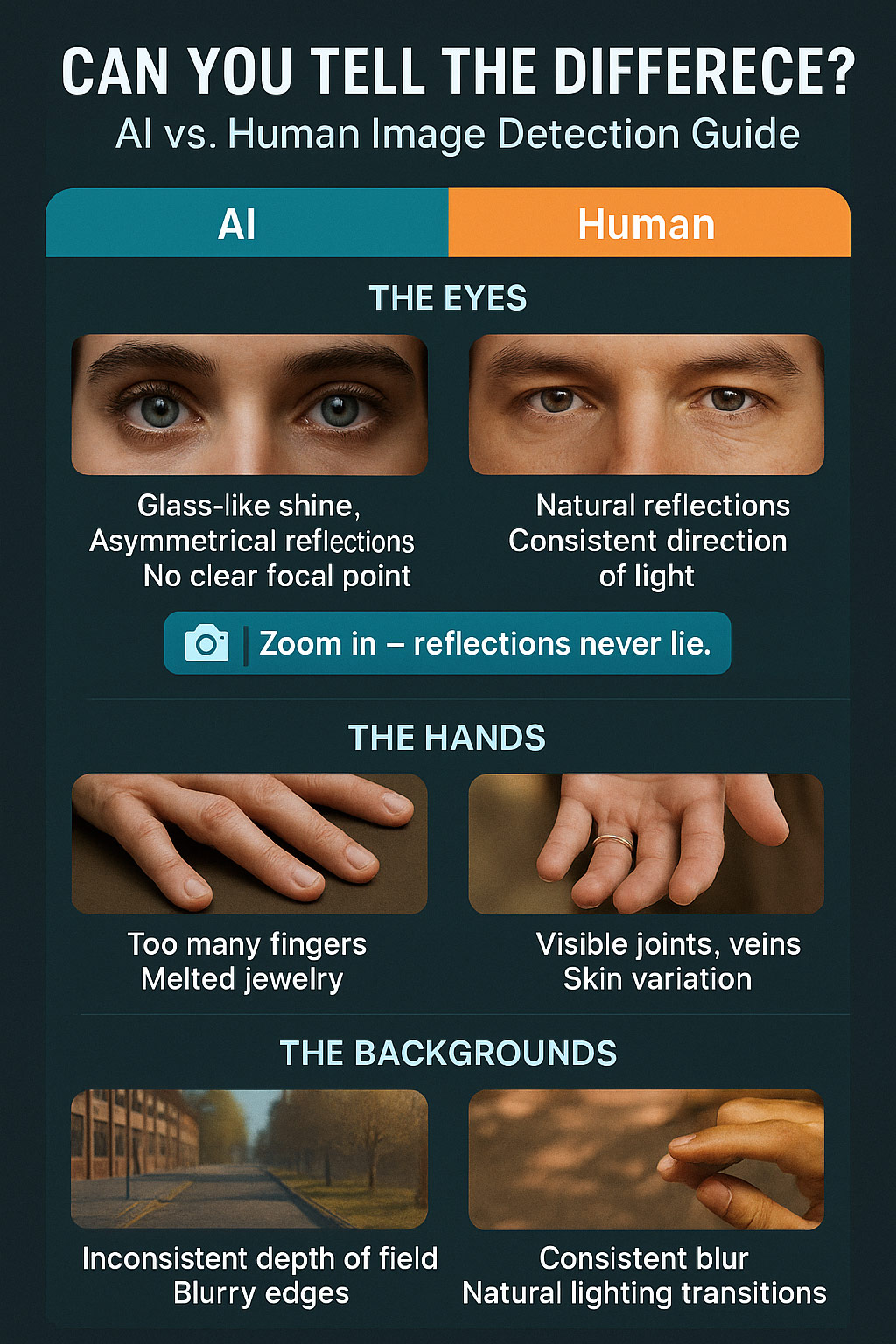

Understanding how modern AI images behave visually and structurally is becoming a necessary skill for navigating digital environments. Practical frameworks such as "Learn To Spot AI Images Like A Pro!" help users build pattern awareness rather than relying on outdated assumptions.

The Impact on Identity and Representation

AI images are increasingly used to represent people who do not exist. Profile photos, testimonial faces, and “team member” portraits are sometimes generated rather than photographed.

Technically, this is efficient. Ethically, it introduces ambiguity. When identity becomes synthetic by default, representation shifts from documentation to simulation.

This trend forces platforms and users to reconsider what visual identity means online. Is an image meant to verify a person, or merely signal one?

The answer is becoming less clear as AI-generated visuals blend into everyday interfaces.

Standards Are Emerging, But Slowly

There are ongoing efforts to address provenance, including cryptographic signatures and content authenticity frameworks. However, adoption is inconsistent, and enforcement is limited.
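
The basic idea behind a signed provenance record can be sketched in a few lines of Python. This is a simplified illustration, not the format of any real standard: the manifest layout, the `make_manifest` and `verify` functions, and the key are all hypothetical. It also uses an HMAC with a shared secret because that is available in the standard library, whereas real content authenticity frameworks rely on public-key signatures and certificates.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"demo-key-not-for-production"  # hypothetical signing key


def make_manifest(image_bytes: bytes, generator: str) -> dict:
    """Bind a claim about the image to a hash of its exact bytes, then sign it."""
    claim = {
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "generator": generator,
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(image_bytes: bytes, manifest: dict) -> bool:
    """Check that the claim is untampered and still matches the image bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    actual_hash = hashlib.sha256(image_bytes).hexdigest()
    hash_ok = hmac.compare_digest(actual_hash, manifest["claim"]["sha256"])
    return signature_ok and hash_ok


image = b"placeholder image bytes"  # stands in for real file contents
manifest = make_manifest(image, "example-model")

print(verify(image, manifest))            # True
print(verify(image + b"\x00", manifest))  # False: the bytes changed
```

The second check fails after a single added byte, which illustrates the adoption problem: a record like this only helps if every platform that handles the image keeps both the bytes and the manifest intact, or re-signs after each transformation.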

Until standards become universal, visual literacy remains the most reliable defense. Not because users should distrust every image, but because the system itself does not provide enough signals.

The rise of AI images is not a temporary disruption. It is a long-term architectural change in how the web produces and consumes visuals.

Learning to Navigate a Synthetic Visual Layer

The internet is adding a synthetic visual layer on top of reality, one that is flexible, scalable, and increasingly indistinguishable from traditional imagery.

Navigating that layer requires new habits. Slower observation. Context awareness. A willingness to question images without assuming malicious intent.

AI images are not the end of trust online. But they do mark the end of blind visual certainty.

Understanding that shift is now part of basic technical literacy on the modern web.